Notes: PostgreSQL Concurrency Handling for Developers

One of the most common issues I see regularly while reviewing code is database queries that can cause concurrency issues. They look clean and innocent but can cause wrong data during race conditions. In this blog, I’ll try to cover some important battle-tested patterns that we should keep in mind as a developer to avoid these.

Transactions - Group Operations

Whenever you’re updating multiple things that must happen together (like transferring money between accounts), wrap them in a transaction. This makes sure that either everything happens, or nothing does, no in-between states.

BEGIN;

UPDATE accounts SET balance = balance - 100 WHERE id = 1;

UPDATE accounts SET balance = balance + 100 WHERE id = 2;

COMMIT;

Pessimistic Locking - Lock Rows When You Know There’ll Be Contention

If multiple processes might update the same row at the same time, don’t take chances. Lock it.

SELECT * FROM accounts WHERE id = 1 FOR UPDATE;

This makes the database wait until the lock is released, ensuring no two transactions change the same data simultaneously.

Optimistic Locking - Let Users Compete, But Detect Conflicts

Add a version or updated_at field, and check it when updating.

-- Read

SELECT balance, version FROM accounts WHERE id = 1;

-- Update (only if version hasn’t changed)

UPDATE accounts

SET balance = 200, version = version + 1

WHERE id = 1 AND version = 3;

If another update sneaked in and changed the version, your update won’t go through.

Atomic Updates - Don’t Read-Then-Write

Let’s say you want to add ₹100 to someone’s account. I have seen code like this many times.

SELECT balance as current_balance FROM accounts WHERE id = 1;

UPDATE accounts SET balance = current_balance + 100 WHERE id = 1;

This is wrong, NEVER do this. That’s a classic race condition waiting to happen.

Correct way to query this.

UPDATE accounts SET balance = balance + 100 WHERE id = 1;

Lock Specific Rows - Not the Whole Table

If you're locking rows (e.g. with FOR UPDATE), make sure your WHERE clause uses indexed columns like primary keys.

Right approach.

SELECT * FROM orders WHERE id = 123 FOR UPDATE;

Wrong approach.

SELECT * FROM orders WHERE status = 'pending' FOR UPDATE;

Without an index on status, PostgreSQL will scan the entire table, evaluating each row. Every matching row ('pending') gets locked.

Insert-if-not-exist with Lock or Conflict Clause

Avoid race conditions during unique inserts.

-- Postgresql

INSERT INTO users (email) VALUES ('x@example.com') ON CONFLICT DO NOTHING;

-- MySQL

INSERT IGNORE INTO users (email) VALUES ('x@example.com');

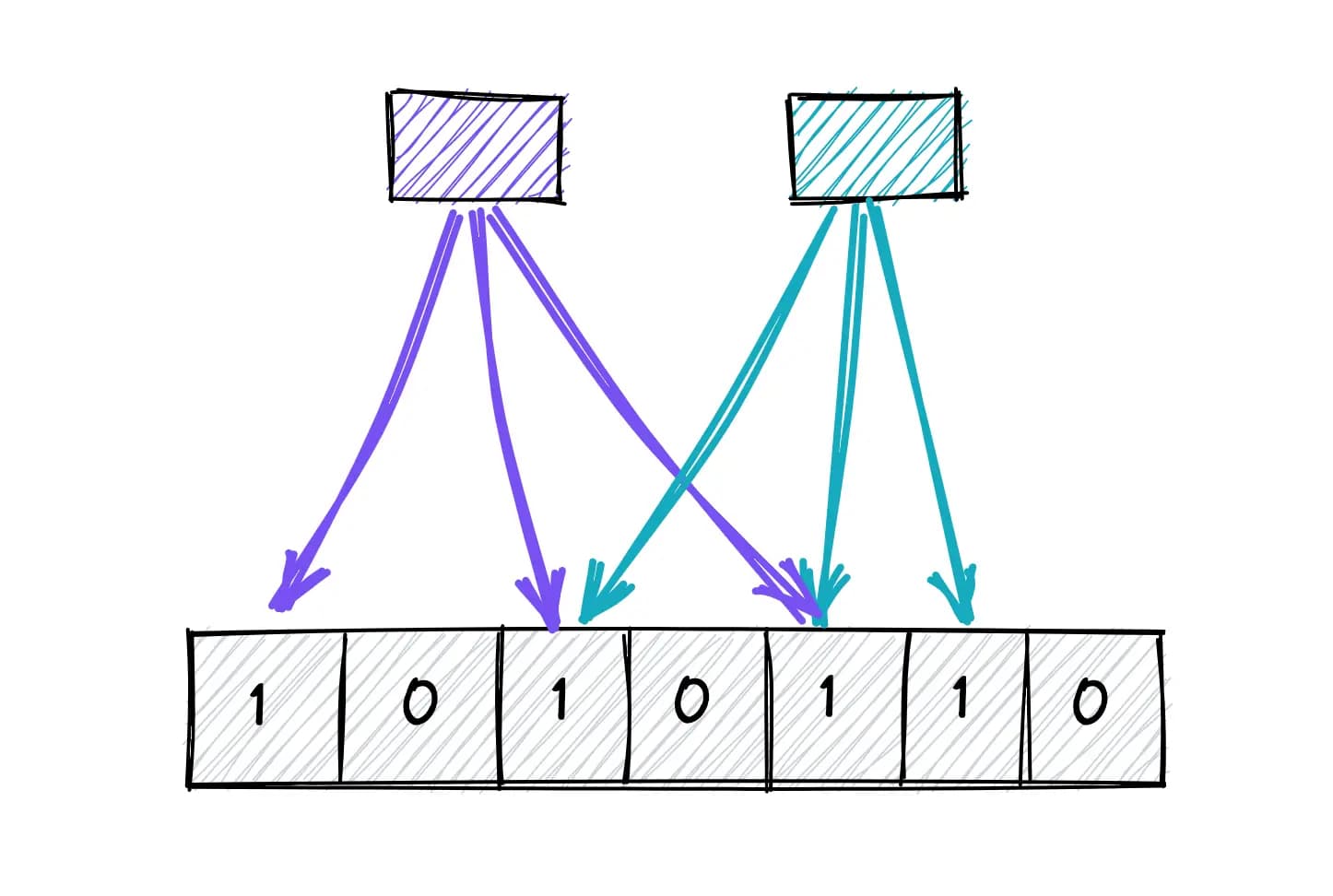

Use Queue Tables with SKIP LOCKED for Background Jobs

When building job queues or background workers, you want to make sure no two workers grab the same job.

SELECT * FROM jobs

WHERE status = 'pending'

ORDER BY created_at

LIMIT 1

FOR UPDATE SKIP LOCKED;

Here SKIP LOCKED lets other workers skip rows already locked by someone else. No waiting, no duplicate processing.

Advisory Locks

When we are running concurrent operations like processing data from database directly, it’s good to have advisory locks in place.

PostgreSQL provides Session Level - pg_try_advisory_lock (non-blocking) and pg_advisory_lock (blocking) and Transaction Level - pg_try_advisory_xact_lock (non-blocking) and pg_advisory_xact_lock (blocking) locks.

SELECT pg_advisory_lock(hashtext('daily_report'));

-- run report logic...

SELECT pg_advisory_unlock(hashtext('daily_report'));

Serializable Isolation Level

This is the highest isolation level which we can use in places where correctness is extremely important and we can compromise with performance as well for that.

SET TRANSACTION ISOLATION LEVEL SERIALIZABLE;

BEGIN;

SELECT * FROM seats WHERE available = true LIMIT 1;

-- Do some checks or process payment

UPDATE seats SET available = false WHERE id = $seat_id;

COMMIT;

In this scenario, two users might both read the same seat and attempt to book it.

With SERIALIZABLE, PostgreSQL ensures only one transaction will succeed, the other fails and must be retried.

Concurrency issues are sneaky and often hard to debug. Just follow the right patterns, and your database logic will scale cleanly, even under load.